Description

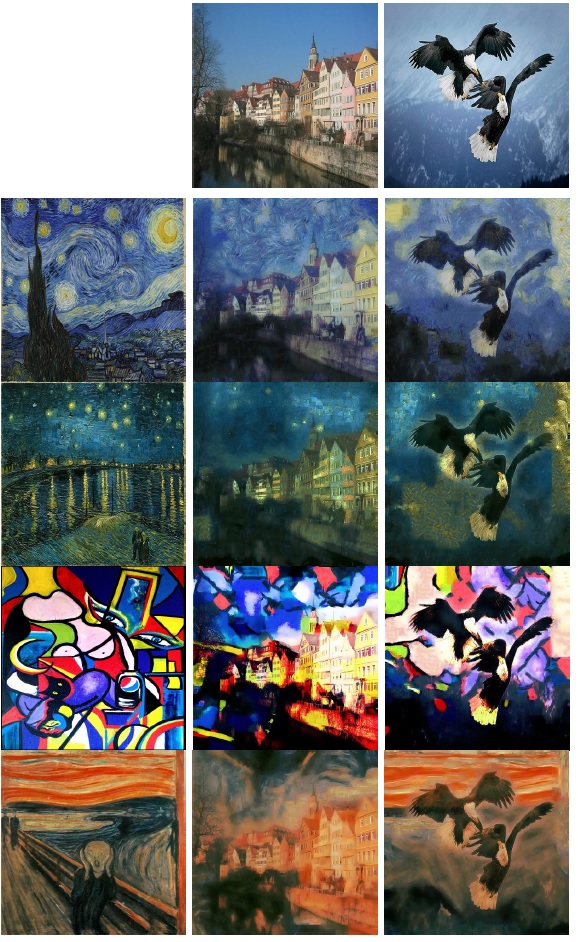

We have shown that it is possible to achieve artistic style transfer within a pure image processing paradigm. This contrasts with previous work that used deep neural networks to learn the difference between “style” and “content” in a painting.

We leverage the work by Kwatra et al. on texture synthesis to accomplish “style synthesis” from our given style images, building on the work of Elad and Milanfar. We have also introduced a novel “style fusion” concept that guides the algorithm to follow the broader structures of the style at a higher level while giving it the freedom to make its own artistic decisions at a smaller scale.
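For readers unfamiliar with patch-based texture synthesis, the sketch below illustrates the general idea such methods rest on: each patch of the current estimate is matched to its nearest patch in the style image, and the overlapping matches are averaged back into the image. This is a simplified illustration under our own assumptions, not the implementation described here. The function names (`extract_patches`, `nearest_patch`, `synthesis_step`) are hypothetical, images are plain grayscale lists of lists, and the sketch omits style fusion, multi-scale processing, and any content constraint.

```python
def extract_patches(img, size, stride):
    """Return (row, col, flat_patch) for every size x size window on a stride grid."""
    h, w = len(img), len(img[0])
    patches = []
    for r in range(0, h - size + 1, stride):
        for c in range(0, w - size + 1, stride):
            patch = [img[r + i][c + j] for i in range(size) for j in range(size)]
            patches.append((r, c, patch))
    return patches


def nearest_patch(query, candidates):
    """Brute-force nearest neighbour under squared Euclidean distance."""
    return min(candidates,
               key=lambda p: sum((a - b) ** 2 for a, b in zip(query, p)))


def synthesis_step(estimate, style, size=2, stride=1):
    """One pass: match each estimate patch to a style patch, then average overlaps."""
    h, w = len(estimate), len(estimate[0])
    style_patches = [p for _, _, p in extract_patches(style, size, 1)]
    total = [[0.0] * w for _ in range(h)]
    count = [[0] * w for _ in range(h)]
    for r, c, patch in extract_patches(estimate, size, stride):
        match = nearest_patch(patch, style_patches)
        for i in range(size):
            for j in range(size):
                total[r + i][c + j] += match[i * size + j]
                count[r + i][c + j] += 1
    # Pixels not covered by any patch (possible when stride > 1) keep their value.
    return [[total[y][x] / count[y][x] if count[y][x] else estimate[y][x]
             for x in range(w)] for y in range(h)]
```

Calling `synthesis_step` repeatedly pulls the estimate toward the local statistics of the style image. A practical system would replace the brute-force search with an approximate nearest-neighbour structure and operate on colour patches at several scales.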

Our results are comparable to the neural network approach while improving speed and maintaining robustness to different styles and contents.
