Description

American Sign Language (ASL) is one of the main forms of communication among deaf communities in the United States and Canada. This motivates us to develop a translator that recognizes hand symbols and outputs their corresponding meaning.

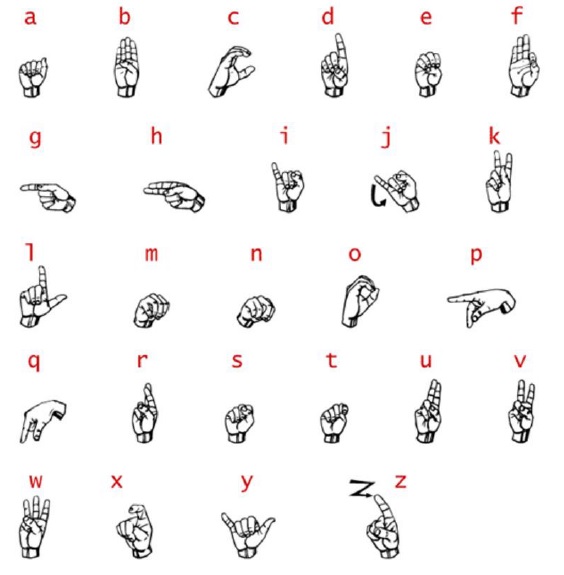

A subset of ASL is the American Manual Alphabet, referred to as fingerspelling, which spells out the 26 letters of the English alphabet using unique hand gestures. Figure 1 illustrates this system. Of these symbols, 24 are static hand postures; the exceptions are 'j' and 'z', which involve motion. To avoid excessive complexity, this project restricts itself to the reduced 24-letter alphabet that excludes 'j' and 'z'.

In this project, we develop a real-time ASL fingerspelling translator using image processing and supervised machine learning.

For classification, we considered the simple yet powerful non-parametric K-nearest neighbors (KNN) algorithm, which is available in MATLAB. In the training phase, the algorithm simply stores the feature vector (from one or a combination of the feature extraction techniques) together with the corresponding class label for each training example, that is, each hand image in the data set.

In the classification (prediction) phase, it assigns the class label that is most common among the K nearest neighbors of the test image's feature vector. Other methods, such as multiclass SVM and discriminant analysis, were also considered. Although they gave comparable or sometimes even better accuracy than KNN on the training set, they produced much higher test errors than KNN.
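The two KNN phases described above can be sketched in a few lines. This is a minimal illustration in Python, not the project's MATLAB implementation: the class name `KNNClassifier`, the Euclidean distance metric, the value K = 3, and the toy 2-D feature vectors are all assumptions made for the example, standing in for real hand-image features.

```python
import math
from collections import Counter


class KNNClassifier:
    """Minimal k-nearest-neighbors classifier (illustrative sketch only)."""

    def __init__(self, k=3):
        self.k = k
        self.features = []
        self.labels = []

    def fit(self, features, labels):
        # Training phase: just store the feature vectors and class labels.
        self.features = [tuple(f) for f in features]
        self.labels = list(labels)
        return self

    def predict(self, query):
        # Rank stored examples by Euclidean distance to the query vector
        # (the distance metric is an assumption of this sketch).
        ranked = sorted(
            zip(self.features, self.labels),
            key=lambda pair: math.dist(pair[0], query),
        )
        # Prediction phase: majority vote among the k nearest labels.
        votes = Counter(label for _, label in ranked[: self.k])
        return votes.most_common(1)[0][0]


# Hypothetical 2-D feature vectors standing in for hand-image features.
train_x = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (1.0, 1.0), (0.9, 1.1), (1.1, 0.9)]
train_y = ["a", "a", "a", "b", "b", "b"]

model = KNNClassifier(k=3).fit(train_x, train_y)
prediction_near_a = model.predict((0.15, 0.1))
prediction_near_b = model.predict((0.95, 1.0))
```

Because training only stores examples, all of the cost falls on prediction, which compares the query against every stored vector. For a real-time translator, this makes the size of the training set and the length of the feature vector the main factors in response time.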
