
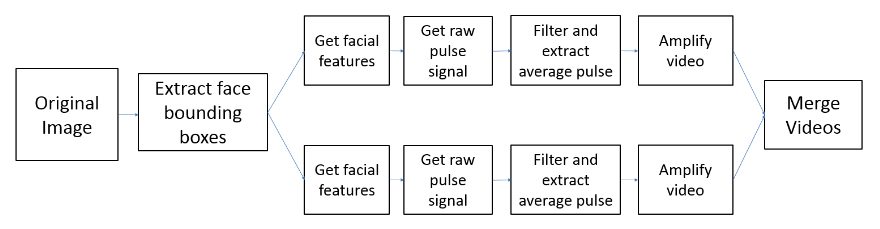

The goal of this project is to visualize heart rate in videos with multiple subjects. Previous work [1] has created visualizations of heart rate for single-subject videos, and other work has automatically detected heart rate from recordings of single faces [2]. Here we attempt to combine the two: detect multiple faces, amplify each one individually within a narrow frequency band to emphasize that individual's heart rate, and then recombine the videos to produce a visualization in which relative heart rates are visible.
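The narrow-band amplification step can be sketched on a single intensity trace, for example the mean value of one face region in each frame. This is a minimal sketch in the spirit of the Eulerian approach of [1], assuming an ideal (brick-wall) temporal bandpass filter. The function names, the 0.8–3 Hz band and the gain `alpha` are illustrative choices, not values taken from this project. The naive O(n²) DFT keeps the example dependency-free; a real pipeline would use an FFT such as `numpy.fft`.

```python
import cmath
import math


def dft(x):
    """Naive O(n^2) discrete Fourier transform; adequate for short traces."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(X):
    """Inverse of dft(); returns the real part of each reconstructed sample."""
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]


def amplify_band(trace, fps, f_lo, f_hi, alpha):
    """Amplify temporal variations of `trace` whose frequency lies in [f_lo, f_hi] Hz.

    The in-band part is isolated with an ideal bandpass filter, scaled by
    `alpha`, and added back, so in-band components grow by (1 + alpha) while
    everything else is left unchanged.
    """
    n = len(trace)
    X = dft(trace)
    band = [0j] * n
    for k in range(n):
        # Bins above n/2 represent negative frequencies.
        f = (k if k <= n // 2 else k - n) * fps / n
        if f_lo <= abs(f) <= f_hi:
            band[k] = X[k]
    filtered = idft(band)
    return [x + alpha * b for x, b in zip(trace, filtered)]
```

For instance, a 10-second trace at 30 fps containing a 1.2 Hz "pulse" and a 0.3 Hz "breathing" component, amplified over 0.8–3 Hz with `alpha = 10`, comes back with the pulse eleven times larger and the breathing component untouched.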

Flowchart of processing for each video. Each video is split into subvideos, one per face, which are recombined at the end to form a multifrequency amplified video.
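The split-and-recombine structure in the flowchart can be sketched as follows. This assumes grayscale frames stored as nested lists and non-overlapping face bounding boxes already produced by some face detector (not shown). Each face is paired with its own processing function, which in the full pipeline would be that face's band-limited amplification. All names here (`crop`, `paste`, `process_per_face`, the `Box` layout) are hypothetical.

```python
from typing import Callable, List, Tuple

Frame = List[List[float]]          # grayscale frame indexed [row][col]
Video = List[Frame]
Box = Tuple[int, int, int, int]    # hypothetical (row, col, height, width) of one face


def crop(video: Video, box: Box) -> Video:
    """Extract the subvideo covering one face box in every frame."""
    r, c, h, w = box
    return [[row[c:c + w] for row in frame[r:r + h]] for frame in video]


def paste(video: Video, sub: Video, box: Box) -> None:
    """Write a processed subvideo back into `video` in place.

    Assumes `sub` has the same shape as the box it was cropped from.
    """
    r, c, h, w = box
    for frame, sub_frame in zip(video, sub):
        for i in range(h):
            frame[r + i][c:c + w] = sub_frame[i]


def process_per_face(video: Video,
                     faces: List[Tuple[Box, Callable[[Video], Video]]]) -> Video:
    """Process each face's subvideo separately and recombine into one video."""
    out = [[row[:] for row in frame] for frame in video]   # copy; input is untouched
    for box, process in faces:
        sub = crop(video, box)          # always read from the unprocessed input
        paste(out, process(sub), box)
    return out
```

Pasting is only well defined for non-overlapping boxes; overlapping faces would need some form of blending, which this sketch does not attempt.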

The most difficult part of extracting heartbeats proved to be separating the actual signal from other sources of noise. We suspect that other physiological signals in roughly the same frequency range played a large part in this. Most notably, our breathing and blinking both fell within this range, especially when aliased. By isolating these potential noise sources, we could address each one accordingly and move to less controlled situations.
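Two small calculations illustrate the noise problem. A component at frequency f sampled at frame rate f_s appears at |f − f_s · round(f / f_s)|, so, for example, a 31.2 Hz component filmed at 30 fps shows up at 1.2 Hz, inside a typical heart-rate band. A common mitigation for out-of-band noise is to search for the spectral peak only within a plausible heart-rate band. The sketch below assumes a 0.75–3 Hz band (45–180 bpm); that band and the function names are illustrative assumptions, not values from this project.

```python
import cmath
import math


def aliased_frequency(f, fs):
    """Apparent frequency (Hz) of a sinusoid at f Hz sampled at fs Hz."""
    return abs(f - fs * round(f / fs))


def estimate_bpm(trace, fps, f_lo=0.75, f_hi=3.0):
    """Return the strongest frequency in [f_lo, f_hi] Hz, in beats per minute.

    Only DFT bins inside the band are examined, so a stronger component
    outside it (e.g. breathing) cannot be mistaken for the pulse.
    Returns None if no bin falls inside the band.
    """
    n = len(trace)
    mean = sum(trace) / n
    x = [v - mean for v in trace]          # remove the DC offset
    best_f, best_mag = None, -1.0
    for k in range(1, n // 2 + 1):         # positive-frequency bins only
        f = k * fps / n
        if f_lo <= f <= f_hi:
            mag = abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n)
                          for t in range(n)))
            if mag > best_mag:
                best_f, best_mag = f, mag
    return None if best_f is None else 60.0 * best_f
```

With a 1.2 Hz pulse and a breathing component at 0.3 Hz that is three times stronger, an unrestricted search returns about 18 (the breathing rate), while the band-limited search returns about 72 bpm. Band limiting cannot help when noise, aliased or not, lands inside the band itself, which is consistent with the difficulty described above.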

Toward practical applications, more work could explore the extent to which this approach scales to larger crowds. Investigating whether these operations can be performed on surveillance camera footage would be of great interest. Specifically, we would explore how performance drops off as resolution or bit depth (bits of information per pixel) decreases. An additional challenge would be to evaluate how the algorithms perform on partially occluded faces.
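One way to prototype the resolution and bit-depth experiment is to degrade existing clips frame by frame before running the pipeline. The sketch below assumes 8-bit grayscale frames as nested lists of ints, models lower resolution as block averaging and lower bit depth as truncating least-significant bits. These are simple hypothetical models of a low-quality camera, not measurements of any real surveillance system, and the function names are illustrative.

```python
def reduce_bit_depth(frame, bits):
    """Keep only the `bits` most significant bits of each 8-bit pixel value."""
    if not 1 <= bits <= 8:
        raise ValueError("bits must be in 1..8")
    shift = 8 - bits
    return [[(v >> shift) << shift for v in row] for row in frame]


def downsample(frame, factor):
    """Reduce resolution by averaging non-overlapping factor x factor blocks.

    Rows and columns that do not fill a whole block are dropped.
    """
    h = len(frame) // factor
    w = len(frame[0]) // factor
    return [[sum(frame[i * factor + di][j * factor + dj]
                 for di in range(factor) for dj in range(factor)) / factor ** 2
             for j in range(w)]
            for i in range(h)]
```

Sweeping `bits` from 8 down to 1 and `factor` upward, and recording how far the estimated heart rate drifts from a reference, would give the drop-off curve described above.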

Finally, concerning visualization, we feel that other forms of amplification could be explored. Since the MIT Eulerian algorithm amplifies both color and displacement variations, other methods may prove to be more intuitive for conveying pulse. For instance, changing the coloration or contrast of an individual’s face could work.
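As an illustration of the coloration idea, a face pixel could be shifted toward red in time with an already-estimated pulse rather than amplifying the raw variations. This is a hypothetical mapping we did not evaluate: the function name, the sinusoidal timing and the `depth` parameter are all assumptions, and an (r, g, b) pixel with 0–255 channels is assumed.

```python
import math


def pulse_tint(pixel, t, fps, bpm, depth=0.15):
    """Shift an (r, g, b) pixel toward pure red in time with a pulse at `bpm`.

    The tint strength oscillates between 0 and `depth` once per heartbeat;
    frame index `t` and `fps` give the time in seconds as t / fps.
    """
    strength = depth * 0.5 * (1 + math.sin(2 * math.pi * (bpm / 60.0) * t / fps))
    r, g, b = pixel
    return (min(255, round(r + strength * (255 - r))),   # push red toward 255
            round(g * (1 - strength)),                    # fade green and blue
            round(b * (1 - strength)))
```

Applying this only inside each face's box, with each face's own estimated rate, would make relative heart rates visible as faces "flushing" at different tempos.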

