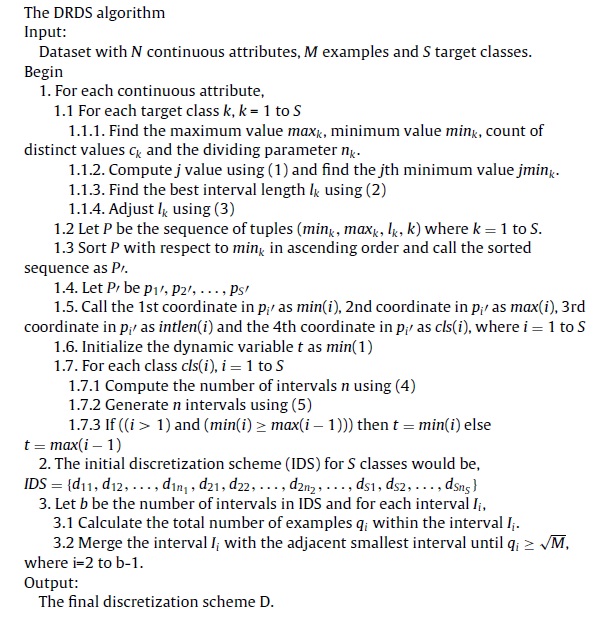

Description

MATLAB code for the following paper is available for download:

Augasta, M. Gethsiyal, and T. Kathirvalavakumar. “A new discretization algorithm based on range coefficient of dispersion and skewness for neural networks classifier.” Applied Soft Computing 12.2 (2012): 619-625.

The code comes with two examples on two datasets.

Abstract:

In this paper we propose a new static, global, supervised, incremental and bottom-up discretization algorithm based on coefficient of dispersion and skewness of data range. It automates the discretization process by introducing the number of intervals and stopping criterion. The results obtained using this discretization algorithm show that the discretization scheme generated by the algorithm almost has minimum number of intervals and requires smallest discretization time. The feedforward neural network with conjugate gradient training algorithm is used to compute the accuracy of classification from the data discretized by this algorithm. The efficiency of the proposed algorithm is shown in terms of better discretization scheme and better accuracy of classification by implementing it on six different real data sets.

A newly developed discretization method is presented. It discretizes continuous attributes based on the range coefficient of dispersion and the skewness of the data, hence its name: discretization based on range coefficient of dispersion and skewness of data (DRDS).
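As a rough illustration of the two statistics named above, the following Python sketch computes them for a single attribute. It is not taken from the MATLAB package. It assumes the textbook definitions: the coefficient of range, (max - min) / (max + min), and the moment coefficient of skewness. The paper may define skewness differently, and the function names here are hypothetical.

```python
def range_coefficient_of_dispersion(values):
    """Coefficient of range: (max - min) / (max + min). Textbook definition (assumed)."""
    lo, hi = min(values), max(values)
    if hi + lo == 0:
        raise ValueError("coefficient of range is undefined when max + min == 0")
    return (hi - lo) / (hi + lo)


def moment_skewness(values):
    """Population moment coefficient of skewness: m3 / m2**1.5 (assumed definition)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n  # second central moment
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    if m2 == 0:
        return 0.0  # constant data has no asymmetry
    return m3 / m2 ** 1.5


# Hypothetical attribute values with one large outlier on the right.
sample = [1.0, 2.0, 2.0, 3.0, 10.0]
rcd = range_coefficient_of_dispersion(sample)  # (10 - 1) / (10 + 1)
skew = moment_skewness(sample)                 # positive: long right tail
print(rcd, skew)
```

A positive skewness value indicates that the attribute's values trail off to the right, while a coefficient of range close to 1 indicates a spread that is wide relative to the magnitude of the values.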

The quality of a discretization is measured by two parameters, namely classification accuracy and the number of discretization intervals [22]. More discretization intervals mean fewer classification errors and a lower cost of data discretization [23]. The DRDS method has two phases. The first phase is concerned only with minimizing classification errors, which results in more intervals in the initial discretization scheme (IDS). The second phase minimizes the number of intervals, without affecting classification accuracy, by merging intervals in the IDS. Phase 2 yields the final discretization scheme (FDS).
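The two-phase, bottom-up idea can be sketched in Python as follows. This is a generic illustration, not the DRDS procedure: phase 1 is replaced here by plain equal-width binning, and phase 2 by a simple rule that merges adjacent intervals with the same majority class, which leaves the training error of majority-class labeling unchanged. All function names and the toy data are hypothetical.

```python
from collections import Counter


def initial_scheme(values, n_bins):
    """Phase 1 stand-in: equal-width cut points giving a fine-grained IDS."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins
    return [lo + i * width for i in range(1, n_bins)]


def assign(value, cuts):
    """Index of the interval a value falls into (a cut belongs to the right interval)."""
    return sum(value >= c for c in cuts)


def majority_labels(values, labels, cuts):
    """Majority class of each interval; None for an empty interval."""
    counts = [Counter() for _ in range(len(cuts) + 1)]
    for v, y in zip(values, labels):
        counts[assign(v, cuts)][y] += 1
    return [c.most_common(1)[0][0] if c else None for c in counts]


def merge_scheme(values, labels, cuts):
    """Phase 2 stand-in: drop a cut when both sides predict the same class."""
    majors = majority_labels(values, labels, cuts)
    kept, current = [], majors[0]
    for cut, right in zip(cuts, majors[1:]):
        if current is None or right is None or right == current:
            if current is None:
                current = right  # absorb an empty interval on the left
        else:
            kept.append(cut)     # class changes here, so the cut is needed
            current = right
    return kept


def training_errors(values, labels, cuts):
    """Errors made when every interval predicts its majority class."""
    majors = majority_labels(values, labels, cuts)
    return sum(majors[assign(v, cuts)] != y for v, y in zip(values, labels))


# Toy data (hypothetical): one attribute, two classes.
values = [1, 2, 3, 4, 5, 6, 7, 8]
labels = ["a", "a", "a", "a", "b", "b", "b", "b"]

ids = initial_scheme(values, n_bins=4)   # three cut points, four intervals
fds = merge_scheme(values, labels, ids)  # merged scheme
print(ids, fds)
print(training_errors(values, labels, ids), training_errors(values, labels, fds))
```

On this toy data the merged scheme keeps a single cut point and the training error is the same before and after merging, which mirrors the goal of phase 2: fewer intervals at no cost in accuracy.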

References:

[1] J. Han, M. Kamber, Data Mining: Concepts and Techniques, Morgan Kaufmann, 2001.

[2] K.J. Cios, L.A. Kurgan, CLIP4: hybrid inductive machine learning algorithm that generates inequality rules, Information Sciences 163 (2004) 37–83.

[3] P. Clark, T. Niblett, The CN2 algorithm, Machine Learning 3 (1989) 261–283.

[4] L.A. Kurgan, K.J. Cios, CAIM discretization algorithm, IEEE Transactions on Knowledge and Data Engineering 16 (2004) 145–152.

[5] C.J. Tsai, C.I. Lee, W.P. Yang, A discretization algorithm based on class-attribute contingency coefficient, Information Sciences 178 (2008) 714–731.

[6] R. Butterworth, D.A. Simovici, G.S. Santos, L.O. Machado, A greedy algorithm for supervised discretization, Biomedical Informatics 37 (2004) 285–292.

[7] U.M. Fayyad, K.B. Irani, Multi-interval discretization of continuous-valued attributes for classification learning, in: Proc. of Thirteenth Int. Conf. on Artificial Intelligence, 1993, pp. 1022–1027.

[8] P. Soman, S. Diwakar, V. Ajay, Insight into Data Mining, Prentice Hall of India, 2006.

[9] R. Kerber, ChiMerge: discretization of numeric attributes, in: Proc. of Ninth Int. Conf. on Artificial Intelligence, 1992, pp. 123–128.

[10] H. Liu, R. Setiono, Feature selection via discretization, IEEE Transactions on Knowledge and Data Engineering 9 (1997) 642–645.

[11] F. Tay, L. Shen, A modified chi2 algorithm for discretization, IEEE Transactions on Knowledge and Data Engineering 14 (2002) 666–670.

[12] C.T. Su, J.H. Hsu, An extended chi2 algorithm for discretization of real value attributes, IEEE Transactions on Knowledge and Data Engineering 17 (2005) 437–441.

[13] Q. Wu, D.A. Bell, T.M. McGinnity, G. Prasad, G. Qi, X. Huang, Improvement of decision accuracy using discretization of continuous attributes, in: Proc. of the Third Int. Conf. on Fuzzy Systems and Knowledge Discovery, Lecture Notes in Computer Science, 4223, 2006, pp. 674–683.

[14] S. Cohen, L. Rokach, O. Maimon, Decision-tree instance-space decomposition with grouped gain-ratio, Information Sciences 177 (2007) 3592–3612.

[15] R.R. Yager, An extension of the naive Bayesian classifier, Information Sciences 176 (2006) 577–588.

[16] K. Kaikhah, S. Doddmeti, Discovering trends in large datasets using neural network, Applied Intelligence 29 (2006) 51–60.

[17] S. Ozekes, O. Osman, Classification and prediction in datamining with neural networks, Electrical and Electronics Engineering 3 (2003) 707–712.

[18] H. Lu, R. Setiono, H. Liu, Neurorule: a connectionist approach to data mining, in: Proc. of VLDB’95, 1995, pp. 478–489.

[19] Z.H. Zhou, Y. Jiang, S.F. Chen, General neural framework for classification rule mining, International Journal of Computers, Systems and Signals 1 (2000) 154–168.

[20] H. Dam, H.A. Abbass, C. Lokan, X. Yao, Neural based learning classifier systems, IEEE Transactions on Knowledge and Data Engineering 20 (2008) 26–39.

[21] F. Moller, A scaled conjugate gradient algorithm for fast supervised learning, Neural Networks 6 (1990) 525–533.

[22] X. Liu, H. Wang, A discretization algorithm based on heterogeneity criterion, IEEE Transactions on Knowledge and Data Engineering 17 (2005) 1166–1173.

[23] R. Jin, Y. Breitbart, C. Muoh, Data discretization unification, Knowledge and Information Systems 19 (2009) 1–29.

[24] S.C. Gupta, V.K. Kapoor, Fundamentals of Mathematical Statistics, Sultan Chand & Sons, New Delhi, 2001.

[25] J. Alcalá-Fdez, L. Sánchez, S. García, M.J. del Jesus, S. Ventura, J.M. Garrell, J. Otero, C. Romero, J. Bacardit, V.M. Rivas, J.C. Fernández, F. Herrera, KEEL: a software tool to assess evolutionary algorithms to data mining problems, Soft Computing 13 (3) (2009) 307–318.
