Description

In this project, we presented a new framework to find the global optimum of graph partitioning problems. The basic building blocks of our method are:

(1) Linearizing a ratio problem to get the landa question (Hochbaum, 2010).

(2) Convex message passing algorithm with a bound on the objective function (Weiss et al., 2007).

(3) Efficient MAP inference with high order potentials (Tarlow et al., 2010).

(4) Tightening linear programming relaxation using conditioning. (Rother et al., 2007, Boros et al., 2006).

By using tools from graphical models we were able to efficiently answer the landa question and provably find the global optimum for fractional graph partitioning problems.

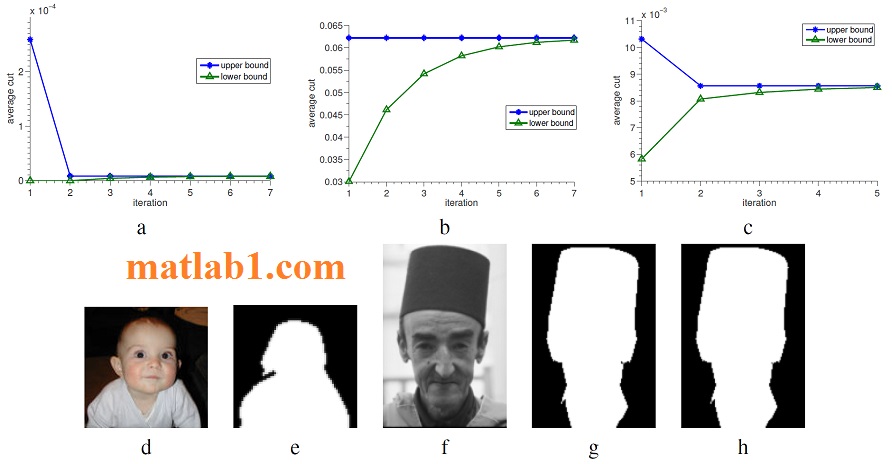

For some of the experiments we have conducted, the simple conditioning algorithm we have used did not tighten the LP relaxation enough: our convex BP method converged to beliefs with “ties”. Specifically, when we conducted experiments on larger images (e.g. 240×160 pixels, 38k nodes) conditioning on only few (up to 10) variables was not enough and we got beliefs with ties. We plan to deal with these cases by adapting ideas from Sontag et al. (2008) who tighten the relaxation by adding an explicit treatment for “frustrated cycles” (i.e. 3). In our problems, we have found that no frustrated cycles exist between nodes that correspond to datapoints, so we need to modify the algorithm in Sontag et al. (2008) to deal with the high order potential. We also plan to improve our conditioning and probing techniques (Rother et al., 2007).

Image Segmentation. The lower and upper bounds on the optimal solution for average cut at each iteration of the bisection algorithm, for the (a) original and (b) resized baby images and (c) ’a man with a hat’ image. (d) Baby input image (e) Segmentation result on the resized image (f) ’A man with a hat’ input image. Segmentation results using: (g) the spectral method (h) our method .

http://mlg.eng.cam.ac.uk/zoubin/talks/lect2gm.pdf

Mezuman, Elad, and Yair Weiss. “Globally optimizing graph partitioning problems using message passing.” Artificial Intelligence and Statistics. 2012.

Reviews

There are no reviews yet.