Description

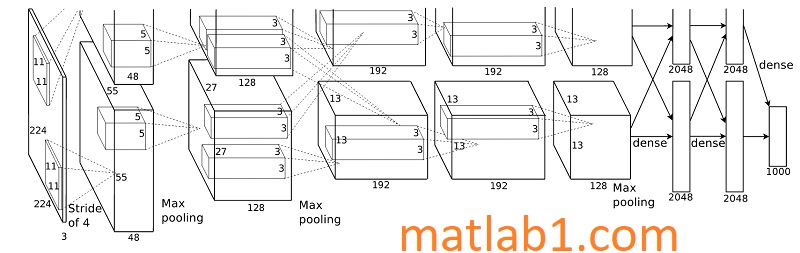

AlexNet was the first model to spark wide interest in deep learning for computer vision. Krizhevsky et al. (https://papers.nips.cc/paper/4824-imagenet-classification-with-deep-convolutional-neural-networks.pdf) proposed AlexNet, which became a pioneering and influential work in the field. The model won the ImageNet 2012 challenge (ILSVRC 2012).

Its top-5 error rate was 15.3%, well ahead of the runner-up at 26.2%. The architecture was relatively simple, with five convolutional layers followed by three fully connected layers.
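The error rate quoted for ImageNet results is usually the top-5 error: a prediction counts as correct if the true label is among the five highest-scoring classes. The following is a minimal sketch of that metric using NumPy; the function name `top_k_error` and the array layout are assumptions made for illustration, not code from the paper.

```python
import numpy as np

def top_k_error(scores, labels, k=5):
    """Fraction of samples whose true label is NOT among the k top-scoring classes.

    scores: array of shape (num_images, num_classes) with one score per class.
    labels: integer array of shape (num_images,) with the true class indices.
    """
    scores = np.asarray(scores)
    labels = np.asarray(labels)
    # Indices of the k highest scores in each row (order within the k is irrelevant).
    top_k = np.argsort(scores, axis=1)[:, -k:]
    # A sample is a hit if its true label appears anywhere in its top-k set.
    hits = (top_k == labels[:, None]).any(axis=1)
    return 1.0 - hits.mean()
```

With `k=1` the same function gives the ordinary (top-1) classification error.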

The challenge was to classify images into 1,000 object categories. The full ImageNet dataset contains about 15 million annotated images in over 22,000 categories.

Of these, only 1,000 categories are used for the competition. AlexNet used ReLU as the activation function, and the authors found that it trained several times faster than saturating activation functions such as tanh. The architecture of the model is shown here:

The paper also used data augmentation techniques such as image translations, horizontal flips, and random cropping. Dropout layers were used to reduce overfitting. The model was trained with Stochastic Gradient Descent (SGD) with momentum, and the SGD hyperparameters were chosen carefully. The learning rate was lowered several times over the course of training, while the momentum and weight decay were held at fixed values. The paper also introduced Local Response Normalization (LRN). An LRN layer normalizes each activation using the activations at the same spatial position in neighboring filters, which keeps any single filter from producing very large activations.
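To make the LRN idea concrete, here is a minimal NumPy sketch of normalizing across neighboring filters. It follows the formula given in the AlexNet paper, with the hyperparameter values reported there (n = 5, k = 2, alpha = 1e-4, beta = 0.75) as defaults. The function name `local_response_norm` and the (channels, height, width) layout are assumptions chosen for illustration, and the explicit loop favors readability over speed.

```python
import numpy as np

def local_response_norm(a, n=5, k=2.0, alpha=1e-4, beta=0.75):
    """Normalize each activation by the squared activations of nearby channels.

    a: activations of shape (channels, height, width).
    n: number of adjacent channels included in each normalization window.
    """
    a = np.asarray(a, dtype=np.float64)
    num_channels = a.shape[0]
    squared = a ** 2
    out = np.empty_like(a)
    half = n // 2
    for i in range(num_channels):
        # The window is clipped at the first and last channel.
        lo = max(0, i - half)
        hi = min(num_channels - 1, i + half)
        # Sum of squares over neighboring channels at each spatial position.
        window_sum = squared[lo:hi + 1].sum(axis=0)
        out[i] = a[i] / (k + alpha * window_sum) ** beta
    return out
```

Because the denominator grows with the squared activations of neighboring channels, a very large response in one filter is damped relative to its neighbors at the same pixel.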

LRN is rarely used today, as later research found that it brings little improvement. AlexNet has about 60 million parameters in total.
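The parameter count can be checked with a little arithmetic. The sketch below assumes the layer sizes described in the original paper rather than anything stated in this text: kernel sizes and filter counts per convolutional layer, the reduced input depth of layers 2, 4, and 5 caused by splitting the network across two GPUs, and a 6x6x256 feature map feeding the first fully connected layer. The helper names are hypothetical.

```python
def conv_params(kernel, in_channels, out_channels):
    """Weights plus one bias per filter for a square convolution kernel."""
    return kernel * kernel * in_channels * out_channels + out_channels

def fc_params(in_features, out_features):
    """Weights plus one bias per output unit for a fully connected layer."""
    return in_features * out_features + out_features

# Layer sizes assumed from the AlexNet paper (two-GPU grouping in conv2, conv4, conv5).
layers = {
    "conv1": conv_params(11, 3, 96),
    "conv2": conv_params(5, 48, 256),
    "conv3": conv_params(3, 256, 384),
    "conv4": conv_params(3, 192, 384),
    "conv5": conv_params(3, 192, 256),
    "fc6": fc_params(6 * 6 * 256, 4096),
    "fc7": fc_params(4096, 4096),
    "fc8": fc_params(4096, 1000),
}

total = sum(layers.values())

for name, count in layers.items():
    print(f"{name}: {count:,}")
print(f"total: {total:,}")
```

Under these assumptions the total comes to roughly 61 million, in line with the 60 million figure, and the three fully connected layers account for the large majority of the parameters.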
