Description

A drawback of conventional neural networks such as the multilayer perceptron (MLP) is that increasing the number of hidden-layer neurons or the number of layers leads to two main problems:

- Slow training

- Getting stuck in local minima

Deep belief networks (DBNs) are one alternative architecture that addresses these two problems.

In addition to classification, deep belief networks can be used for feature extraction.

One advantage of deep belief networks, explained in this educational video, is that they can learn from unlabeled data: high-level features of the training data are extracted without using labels, which can improve the network's ability to discriminate between the classes in the data.

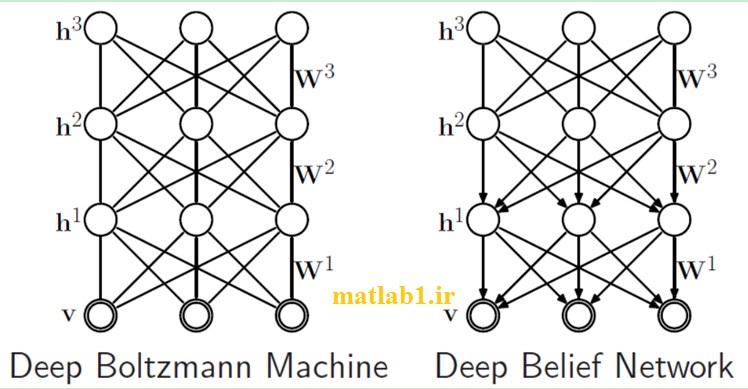

Deep belief networks are built from layers called restricted Boltzmann machines (RBMs). Stacking RBMs, with each one trained on the outputs of the one below it, produces a deep belief network that processes data hierarchically.
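The stacking idea can be illustrated with a minimal NumPy sketch. It assumes binary (Bernoulli) visible and hidden units and uses one-step contrastive divergence (CD-1) as the training rule; the video may use a different setup. The names `RBM`, `train_dbn` and `extract_features`, the layer sizes, and the toy data are hypothetical and chosen only for illustration. No labels are used at any point: each RBM is trained on the hidden activations of the previous one, and the top layer's activations serve as the extracted features.

```python
import numpy as np


class RBM:
    """Bernoulli-Bernoulli restricted Boltzmann machine trained with CD-1 (illustrative sketch)."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.W = rng.normal(0.0, 0.01, size=(n_visible, n_hidden))  # visible-hidden weights
        self.b = np.zeros(n_visible)  # visible biases
        self.c = np.zeros(n_hidden)   # hidden biases

    @staticmethod
    def _sigmoid(x):
        return 1.0 / (1.0 + np.exp(-x))

    def hidden_probs(self, v):
        # p(h_j = 1 | v) = sigmoid(c_j + sum_i v_i W_ij)
        return self._sigmoid(v @ self.W + self.c)

    def visible_probs(self, h):
        # p(v_i = 1 | h) = sigmoid(b_i + sum_j W_ij h_j)
        return self._sigmoid(h @ self.W.T + self.b)

    def cd1_step(self, v0, lr):
        # Positive phase: hidden probabilities driven by the data, then a binary sample.
        ph0 = self.hidden_probs(v0)
        h0 = (self.rng.random(ph0.shape) < ph0).astype(float)
        # Negative phase: one Gibbs step gives a reconstruction of the input.
        pv1 = self.visible_probs(h0)
        ph1 = self.hidden_probs(pv1)
        n = v0.shape[0]
        # CD-1 update: data correlations minus reconstruction correlations.
        self.W += lr * (v0.T @ ph0 - pv1.T @ ph1) / n
        self.b += lr * (v0 - pv1).mean(axis=0)
        self.c += lr * (ph0 - ph1).mean(axis=0)
        return float(np.mean((v0 - pv1) ** 2))  # reconstruction error, for monitoring only

    def fit(self, data, epochs=50, lr=0.1, batch_size=16):
        for _ in range(epochs):
            idx = self.rng.permutation(len(data))
            for start in range(0, len(data), batch_size):
                self.cd1_step(data[idx[start:start + batch_size]], lr)
        return self


def train_dbn(data, layer_sizes, rng, epochs=50):
    """Greedy layer-wise pretraining: each RBM learns from the previous layer's output."""
    rbms, x = [], data
    for n_hidden in layer_sizes:
        rbm = RBM(x.shape[1], n_hidden, rng).fit(x, epochs=epochs)
        rbms.append(rbm)
        x = rbm.hidden_probs(x)  # hidden activations become the next RBM's input
    return rbms


def extract_features(rbms, data):
    """Pass data up through the stack and return the top-layer activations."""
    x = data
    for rbm in rbms:
        x = rbm.hidden_probs(x)
    return x


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical unlabeled binary data: two prototype patterns with 5% bit-flip noise.
    prototypes = np.array([[1, 1, 1, 0, 0, 0], [0, 0, 0, 1, 1, 1]], dtype=float)
    X = prototypes[rng.integers(0, 2, size=200)]
    flip = rng.random(X.shape) < 0.05
    X = np.where(flip, 1.0 - X, X)
    dbn = train_dbn(X, layer_sizes=[4, 2], rng=rng)
    features = extract_features(dbn, X)
    print(features.shape)  # (200, 2)
```

In a full DBN workflow, the pretrained weights would typically be used to initialize a network that is then fine-tuned with labeled data, or the extracted features would be passed to a separate classifier.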
