Description

This training video is the fourth part of the complete deep learning course in MATLAB.

This section is dedicated to implementing and programming deep learning models in MATLAB.

In release R2018b, MathWorks renamed Neural Network Toolbox to Deep Learning Toolbox. This change reflects the company's commitment to providing a strong deep learning toolkit for its users.

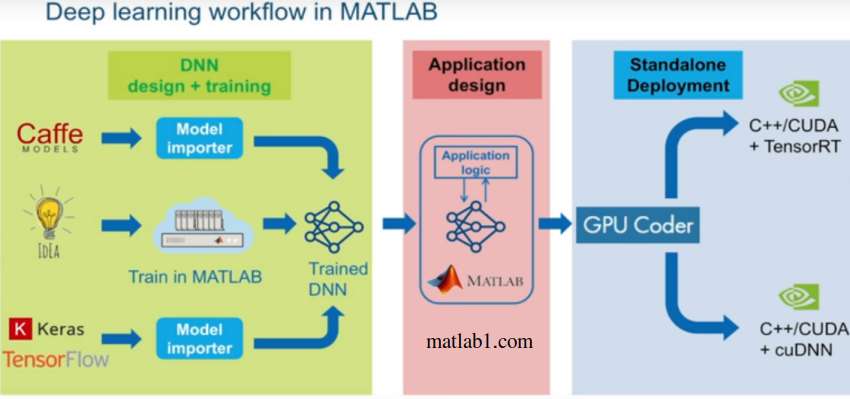

Deep Learning workflow in MATLAB

Deep Learning Toolbox in MATLAB provides a convenient framework for designing and implementing deep neural networks with algorithms and pre-trained models. You can use convolutional neural networks (ConvNets, CNNs) and long short-term memory (LSTM) networks for classification and regression on image, time-series, and text data.

You can create network architectures such as generative adversarial networks (GANs) and Siamese networks using automatic differentiation, custom training loops, and shared weights.

With the Deep Network Designer app, you can design, analyze, and train deep neural networks graphically, without writing code.

Using the Experiment Manager app, you can manage multiple deep learning experiments, track the training parameters of each experiment, analyze results, compare the code from different experiments, and select the best model. In effect, the app gives you version control over your experiments. In a real deep learning task, finding the right parameters for a model is very time-consuming, and the parameters must be saved on every run so that the best setting can be found by comparing the performance of the different runs. Experiment Manager makes this easy.

Experiment Manager tool in MATLAB

With Deep Learning Toolbox, you can visualize a deep learning model and inspect its layers and the activations of each layer. This makes the structure of your model easy to see and helps you understand how it works.

With MATLAB Deep Learning Toolbox, you can exchange models with other deep learning frameworks such as TensorFlow™ and PyTorch. Many Python programmers use TensorFlow™ and PyTorch, so you can import models built with these libraries into MATLAB and use them as MATLAB models. Models are exchanged through the ONNX format, and you can also import models from TensorFlow-Keras and Caffe into MATLAB.

This toolbox supports transfer learning with DarkNet-53, ResNet-50, NASNet, SqueezeNet, and other pre-trained models. As stated in the Deep Learning Tutorial video, this feature saves you from reinventing the wheel, so you can build advanced models easily and achieve very high performance.

With deep learning in MATLAB, you can speed up model training on a single GPU or on multiple GPUs. This feature relies on Parallel Computing Toolbox. You can also train in the cloud, including NVIDIA® GPU Cloud and Amazon EC2® GPU instances. This feature relies on MATLAB Parallel Server.

Headlines:

Pre-trained networks

Add-On Explorer tool

Install a deep learning network in MATLAB

Call AlexNet in MATLAB

Comparison of pre-trained deep learning networks in terms of speed, size, and accuracy

Important points in choosing a deep learning model

Start programming with a simple code

Layers parameter

Print the structure of a network

Calculate the appropriate size of the input image

Resize the input image

Names of different network layers

Parameters of each network layer

Extract the first layer parameters

Extract the parameters of the next layers

FilterSize, NumChannels and Stride

Learnable Parameters

Pooling layer parameters

maxPooling2dLayer class

Depth, size, number of parameters and image size of squeezenet, googlenet, inceptionv3, densenet201, mobilenetv2, resnet18, resnet50, resnet101, xception, inceptionresnetv2, shufflenet, nasnetmobile, nasnetlarge, darknet19, darknet53, alexnet, vgg16

Install googlenet

Check MATLAB version

Check googlenet layers

Network output class names

Introducing Caffe models

Introducing the Deep Network Designer app

Work with deep learning in MATLAB without any programming

Network Design Tool Start Page

Designer options

Introducing the Layer Library

Auto arrange

Introducing Analyze

Find design errors and warnings

Introducing Export

Colors of each layer

Upload data

Validation data and Training data

Augmentation options

Change training data

Determine the percentage of validation data

An example of transfer learning

Set the fully connected layer

Set the Output layer

Report from network checks

Training adjustment options

Select the training function or solver

Introducing different parts of the training window

Accuracy, Loss function

Graphs from training

Reasons to stop training

Training Cycle

Hardware resource

Export option

Example

Get output from the trained network

What can you do with the Deep Network Designer app in MATLAB?

How much data is needed in transfer learning?

Benefits of Transfer Learning

An example of Transfer learning

NumFilters option

ValidationFrequency

MaxEpochs

Generate code with basic parameters

predict command

Structure of deep learning networks

What parameter depends on the type and number of layers?

Differences between classification and regression layers

Softmax layer

A small or large network

The concept of sequential

Define the layers of a network in code

imageInputLayer layer

Build complex networks

Concept of Directed Acyclic Graph (DAG)

layerGraph command

addLayers command

connectLayers command

MATLAB programming example

View network structure with plot

Training options in programming

Solver types in training: sgdm, adam, and rmsprop

trainNetwork command

Implement training of a deep learning network

TrainingCycle option

Stop the training process

Epoch concept

Final point

Extract weight and bias from the trained network

Apply test data

Example of classification with a CNN

How to enter data into MATLAB?

How to define the structure of a network?

How to train the network?

How to apply test data to the network?

Introducing imageDatastore

Benefits of imds

How to identify all images in a folder without reading all images in MATLAB?

fullfile command

Example of imageDatastore

Extract an image from imds

countEachLabel command

readimage command

Divide the data into two parts: training and testing with MATLAB commands

Confusion matrix

Detect network errors

Channels in network layers

Convolution layer tutorial

The concept of the filter in the convolution layer and filterSize

Stride in the convolution layer

Downsampling concept

Number of weights in a filter

Dilated convolution

DilationFactor

The concept of a feature map

The formula for the number of parameters of a convolution layer

Zero Padding

The formula for the number of neurons

Output size formula

Batch normalization layer

Advantages of normalization in deep learning

The optimal position of the normalization layer

ReLU layer theory

Activation layers

Leaky ReLU layer

Clipped ReLU layer

Cross-channel normalization layer

Max pooling tutorial

Average pooling tutorial

Pooling example

Pooling task

Dropout layer

Fully Connected layer

Reasons to use Softmax in the classification output

Familiarity with the deep learning layers available in MATLAB 2020

Input layers

Sequence input

The concept of ROI

2D convolution layer

3D convolution layer

Grouped convolution layer

Transposed convolution layer

Fully connected layer

Sequence layers

LSTM layer and bidirectional LSTM layer and GRU layer

The concept of flatten in deep learning

Global pooling layer

2D unpooling layer

Combination layers

Addition layer

Concatenation layer

Weighted addition layer

Object detection layers

GAN layer

Pixel classification layer

A practical example of identifying an object in front of a webcam connected to a computer

Identify sunglasses, pens, and a mouse with deep learning

A practical example of Transfer Learning

Change the output classes of a deep network

Specify a name for each layer

imageDataAugmenter command

augmentedImageDatastore command

Example of face recognition

Example of diagnosing coronavirus disease

Experiment management tools

Experiment Manager

Comparison of deep learning models

Version control in MATLAB

Optimizing the parameters of a deep learning model

Create an experiment

Experiment Browser section

Specify the variable parameter

Define an Experiment

Hyperparameter Table

Setup Function

Test several deep learning networks together

Sort the results in Experiment Manager
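
Several of the headlines above concern convolution-layer arithmetic: the output size formula, the number of learnable parameters, stride, zero padding, and DilationFactor. As a rough illustration only, the standard formulas can be sketched as follows. The sketch is written in Python rather than MATLAB, the function names are hypothetical, and the example sizes are assumed values chosen for illustration, not taken from the course.

```python
def conv_output_size(input_size, filter_size, stride=1, padding=0, dilation=1):
    """Output size along one spatial dimension of a convolution layer.

    Hypothetical helper: 'padding' is the amount added on EACH side.
    """
    # A dilated filter spans (filter_size - 1) * dilation + 1 input positions.
    effective_filter = (filter_size - 1) * dilation + 1
    return (input_size + 2 * padding - effective_filter) // stride + 1


def conv_learnable_params(filter_height, filter_width, num_channels, num_filters):
    """Number of learnable parameters: filter weights plus one bias per filter."""
    weights_per_filter = filter_height * filter_width * num_channels
    return (weights_per_filter + 1) * num_filters


if __name__ == "__main__":
    # Assumed example: 227x227x3 input, 96 filters of size 11x11, stride 4, no padding.
    print(conv_output_size(227, 11, stride=4))    # prints 55
    print(conv_learnable_params(11, 11, 3, 96))   # prints 34944
```

With a stride greater than 1 the layer downsamples its input, while zero padding can keep the output the same size as the input: for instance, a 3x3 filter with dilation 2 and padding 2 maps a 28-pixel dimension to 28 pixels.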
