MULTISENSOR FUSION

Sensor fusion combines data from several sensors to obtain better information for perception. Humans and animals process data from multiple senses in order to reason and act, and the same principle is applied in multi-sensor data fusion: data from different sensors are combined into a common representation format. In robotic systems, multi-sensor fusion plays a crucial role, since interaction with the environment is instrumental to the successful execution of a task. Multi-sensor fusion has significant applications in mobile robots, defense systems (such as target tracking), medicine, transportation systems and industry. The motivation for sensor fusion is discussed in Section (1). Section (2) describes the various types of sensor fusion proposed in the literature.

1. Sensor Fusion Categories

Depending upon the sensor configuration, there are three main categories of sensor fusion: complementary, competitive and co-operative. These are described below.

a) Complementary: In this method, each sensor provides data about a different aspect or attribute of the environment. By combining the data from all of the sensors, we arrive at a more global view of the environment or situation. Since there is no dependency between the sensors, combining the data is relatively easy.

b) Competitive: In this method, as the name suggests, several sensors measure the same or similar attributes. The data from the sensors are used to determine an overall value for the attribute under measurement. The measurements are taken independently and can also include measurements taken by a single sensor at different time instants. This method is useful in fault-tolerant architectures, where it increases the reliability of the measurement.

c) Co-operative: Co-operative sensor networks are used when data from two or more independent sensors are required to derive information that no single sensor can provide about the environment. A common example is stereoscopic vision, in which two cameras together yield depth information that neither provides alone.

Several other types of sensor networks exist, such as corroborative, concordant and redundant. Most of them are derived from the categories described above. Dasarathy classified sensor fusion types according to their input/output characteristics. Figure 1 shows the various sensor fusion types. Only a few combinations of inputs and outputs are allowed in Dasarathy's scheme.
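As an illustration of competitive fusion, the following is a minimal Python sketch of two common ways to combine redundant readings of the same attribute. It is not taken from the text: the inverse-variance weighting rule, the median rule, the function names and the sample readings are all assumptions chosen for illustration, and the weighting rule assumes independent, unbiased sensors with known noise variances.

```python
from statistics import median


def fuse_inverse_variance(readings, variances):
    """Fuse redundant readings by weighting each with 1/variance.

    Assumes independent, unbiased sensors with known noise variances
    (an assumption of this sketch, not a claim from the text).
    """
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    estimate = sum(w * r for w, r in zip(weights, readings)) / total
    fused_variance = 1.0 / total  # never larger than the best single sensor
    return estimate, fused_variance


def fuse_median(readings):
    """Fault-tolerant alternative: the median ignores a single wild outlier."""
    return median(readings)


# Hypothetical readings of one temperature from three redundant sensors.
readings = [20.0, 22.0, 21.0]
variances = [1.0, 4.0, 2.0]
estimate, fused_variance = fuse_inverse_variance(readings, variances)
print(estimate, fused_variance)

# One faulty sensor reports 55.0; the median stays close to the healthy ones.
robust_value = fuse_median([20.1, 19.9, 55.0])
print(robust_value)
```

The weighted average favours the more accurate sensors, while the median trades some accuracy for robustness against a faulty sensor, which is the property the fault-tolerant use case relies on.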

Figure 1: Dasarathy's classification of multi-sensor fusion

2. Sensor Fusion Topologies

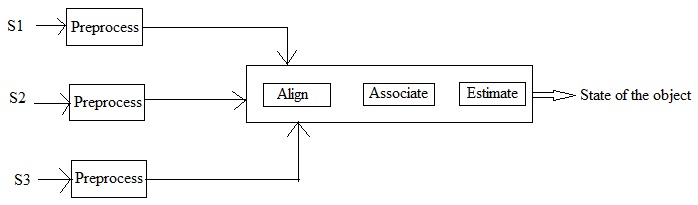

There are three fusion topologies: centralized, decentralized and hybrid (hierarchical). Each of these is described below.

a) Centralized Architecture: In this architecture, a single node handles the fusion process. The sensor data undergo preprocessing before being sent to the central node, where fusion takes place. Figure 2 shows a typical centralized architecture.

b) Decentralized Architecture: In this architecture, each sensor processes data at its own node, and there is no need for a global or central node. Since the information is processed individually at each node, this architecture is used in large, widely distributed applications such as huge automated plants and spacecraft health monitoring. Figure 3 shows a typical decentralized architecture.

c) Hybrid (Hierarchical) Architecture: This architecture combines the centralized and decentralized (distributed) types. When there are constraints on the system, such as a requirement for a lower computational workload or limits on the communication bandwidth, the distributed scheme can be enabled. Centralized fusion can be used when higher accuracy is necessary.

A simple comparison between the centralized and decentralized topologies is shown in Table 1.
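The difference between the centralized and decentralized topologies can be sketched in a few lines of Python. This is an illustrative sketch only: the text does not prescribe a fusion rule, so simple averaging is assumed for the central node, and average consensus (each node repeatedly moving toward its neighbours' values) is swapped in as one possible decentralized scheme. The ring network, step size and readings are hypothetical.

```python
def centralized_fusion(readings):
    """A single central node receives every reading and averages them."""
    return sum(readings) / len(readings)


def decentralized_consensus(readings, neighbors, step=0.3, iterations=200):
    """Average consensus: no central node, only neighbour-to-neighbour exchange.

    Each node nudges its local estimate toward its neighbours' estimates.
    With symmetric links, a step below 1 / (maximum node degree) is
    sufficient for every node to converge to the network-wide average.
    """
    state = list(readings)
    for _ in range(iterations):
        state = [
            x + step * sum(state[j] - x for j in neighbors[i])
            for i, x in enumerate(state)
        ]
    return state


# Hypothetical network: four nodes connected in a ring.
readings = [10.0, 12.0, 11.0, 15.0]
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}

central_estimate = centralized_fusion(readings)
node_estimates = decentralized_consensus(readings, ring)
print(central_estimate)
print(node_estimates)
```

In this sketch both approaches arrive at the same value, but the centralized version needs every reading delivered to one node, whereas the consensus version needs only local links at the cost of several communication rounds.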

Figure 2: Centralized Topology

Figure 3: Decentralized Topology

Table 1: Centralized and Decentralized topologies

Can you please provide the MATLAB code for deploying fixed sensors and a fusion center in a network, such that the sensor data are collected by the fusion center to make a final global decision?
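A minimal sketch of such a setup is given below, written in Python rather than MATLAB; the structure should carry over to MATLAB with little change. Everything specific in it is an assumption made for illustration: the square grid deployment, the distance-based signal model, the Gaussian noise, the detection threshold, and the k-out-of-N counting rule used at the fusion center. The function names are hypothetical.

```python
import math
import random


def deploy_grid(side, spacing):
    """Place side*side fixed sensors on a square grid; returns (x, y) positions."""
    return [(i * spacing, j * spacing) for i in range(side) for j in range(side)]


def local_decision(sensor, target, rng, power=1000.0, noise_std=1.0, threshold=5.0):
    """Return 1 if the sensor's noisy measurement exceeds the threshold, else 0.

    Assumed signal model: strength decays as power / (1 + distance^2).
    A target of None means nothing is present, so the sensor sees only noise.
    """
    if target is None:
        signal = 0.0
    else:
        distance = math.dist(sensor, target)
        signal = power / (1.0 + distance ** 2)
    measurement = signal + rng.gauss(0.0, noise_std)
    return 1 if measurement > threshold else 0


def fusion_center(decisions, k):
    """k-out-of-N rule: declare a detection if at least k sensors report 1."""
    return 1 if sum(decisions) >= k else 0


def simulate(target, seed=0, k=3):
    """Deploy the sensors, collect their local decisions, fuse them globally."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    sensors = deploy_grid(side=4, spacing=10.0)
    decisions = [local_decision(s, target, rng) for s in sensors]
    return fusion_center(decisions, k), decisions


global_with_target, local_with_target = simulate(target=(15.0, 15.0))
global_without_target, local_without_target = simulate(target=None)
print("target present:", global_with_target, local_with_target)
print("target absent: ", global_without_target, local_without_target)
```

Here each sensor sends only a one-bit local decision to the fusion center, which keeps the communication load low. Sending the raw measurements instead and thresholding their sum at the fusion center is another option, at the cost of more bandwidth.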